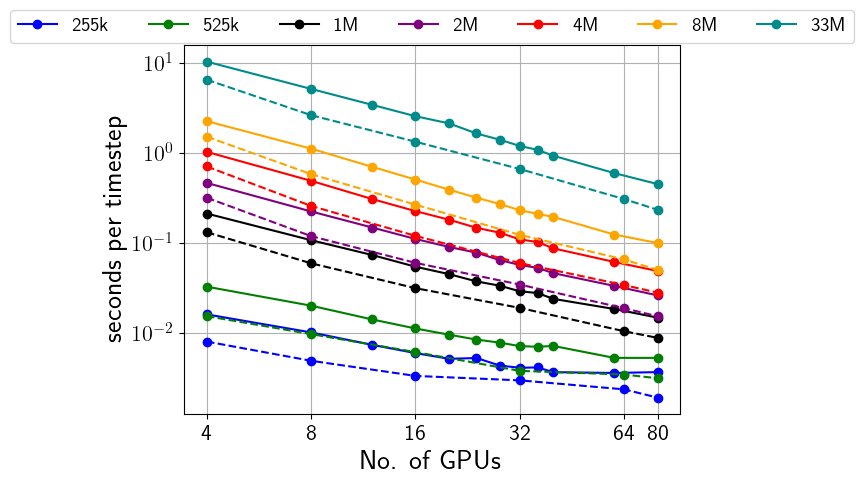

We are excited to announce the successful completion of Milestone 5 in the MultiXscale project: “WP4 Pre-exascale workload executed on EuroHPC architecture”. This marks a significant achievement in our ongoing efforts to push the boundaries of computational fluid dynamics. For this milestone, we performed a comprehensive strong scalability study of a dissipative particle dynamics (DPD) fluid in equilibrium. We ran the simulations with the open boundary molecular dynamics (OBMD) plugin (solid lines in the figure above) and compared them to simulations without OBMD (dashed lines), where periodic boundary conditions were applied.

One of the standout results from our study was that the inclusion of OBMD did not impact the strong scalability of the LAMMPS code, except for a constant prefactor. In other words, OBMD makes each run slower by a factor that does not depend on the number of GPUs, so the scaling behavior and parallel efficiency stay the same as we increase the computational resources. We observed excellent strong scalability up to at least 80 A100 GPUs, which represents about one-third of the EuroHPC Vega GPU partition.
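To make the "constant prefactor" statement concrete, here is a minimal Python sketch. The timings and the overhead factor below are hypothetical placeholders, not the measured data from this study, and `strong_scaling` is an illustrative helper rather than anything provided by LAMMPS or OBMD. It computes speedup and parallel efficiency relative to the smallest GPU count and shows that multiplying every runtime by the same factor leaves both unchanged.

```python
def strong_scaling(timings):
    """Return {n_gpus: (speedup, efficiency)} relative to the smallest GPU count.

    timings maps a GPU count to the wall-clock time for the same fixed problem.
    """
    n0 = min(timings)   # baseline GPU count
    t0 = timings[n0]    # baseline wall-clock time
    result = {}
    for n, t in sorted(timings.items()):
        speedup = t0 / t                   # how much faster than the baseline
        efficiency = speedup / (n / n0)    # 1.0 would be ideal scaling
        result[n] = (speedup, efficiency)
    return result


# Hypothetical wall-clock times in seconds (NOT measured data).
pbc_times = {8: 100.0, 16: 52.0, 40: 22.0, 80: 12.0}

# Hypothetical constant prefactor standing in for the OBMD overhead.
OVERHEAD = 1.25
obmd_times = {n: OVERHEAD * t for n, t in pbc_times.items()}

pbc = strong_scaling(pbc_times)
obmd = strong_scaling(obmd_times)

for n in pbc:
    print(f"{n:3d} GPUs  efficiency without OBMD: {pbc[n][1]:.3f}  with OBMD: {obmd[n][1]:.3f}")
```

Because the prefactor cancels in the ratio of two runtimes, the two efficiency columns are identical; on a log-log plot of runtime against GPU count, the two curves would be parallel and differ only by a vertical shift.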

To put this into perspective, each A100 GPU delivers approximately 10 TFlops in double precision and 20 TFlops in single precision. The 80-GPU workload we tested is therefore running on hardware with a raw peak compute capability of roughly 0.8 to 1.6 Petaflops, highlighting the immense computational power being utilized. Such scalability is crucial for large-scale simulations, as it ensures our methods remain efficient even when deployed on advanced supercomputing infrastructures.
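The aggregate figure is simply the GPU count times the per-GPU peak. As a quick sanity check, the sketch below does that arithmetic; `aggregate_peak_pflops` is a hypothetical helper name. With the rounded values of 10 and 20 TFlops per GPU, the product is 0.8 and 1.6 PFlops. With NVIDIA's published A100 datasheet peaks of 9.7 TFlops (FP64) and 19.5 TFlops (FP32), a detail added here rather than taken from the study, it is about 0.78 and 1.56 PFlops. These are theoretical peaks, not sustained application performance.

```python
def aggregate_peak_pflops(n_gpus, tflops_per_gpu):
    """Theoretical peak of n_gpus identical GPUs, in PFlops (1 PFlops = 1000 TFlops)."""
    return n_gpus * tflops_per_gpu / 1000.0


N_GPUS = 80  # GPU count used in the scalability study

# Rounded per-GPU peaks quoted in the text, in TFlops.
rounded = {"double precision": 10.0, "single precision": 20.0}
# NVIDIA datasheet peaks for the A100 (assumption: non-tensor-core FP64 and FP32).
datasheet = {"double precision": 9.7, "single precision": 19.5}

for label, per_gpu in (("rounded", rounded), ("datasheet", datasheet)):
    for precision, tflops in per_gpu.items():
        peak = aggregate_peak_pflops(N_GPUS, tflops)
        print(f"{label:9s} {precision}: {peak:.3f} PFlops")
```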

The strong scalability of our simulations is vital for researchers aiming to model complex fluid dynamics at large scales. By confirming that OBMD scales effectively alongside LAMMPS, we can confidently apply this method to larger, more detailed simulations without worrying about performance degradation. This opens up new possibilities for highly accurate simulations in fields such as materials science, biophysics, and chemical engineering.

With Milestone 5 now complete, we are moving closer to our overarching goal of enabling multi-scale simulations that leverage the power of modern supercomputing resources. This achievement highlights the robustness and efficiency of our OBMD approach, and we are excited to continue exploring its applications in even more complex scenarios.